This article was published in the PLI Chronicle: Insights and Perspectives for the Legal Community and is republished with permission.

Responsible AI in Practice

Responsible AI in Practice is a series featuring practical, actionable guidance for teams navigating artificial intelligence governance and responsibility, authored by the experts at Responsible AI Institute (RAI).

Financial services is not waiting for the governance conversation to catch up. Agentic AI is already running in production at global banks, payments networks, and lending platforms, executing transactions, routing credit decisions, and managing procurement workflows. And it is already failing in ways that existing frameworks were not built to catch. The failures are not dramatic. They are structural, and they are repeating.

Across conversations with banks and payment networks over the past year, three deployment patterns have surfaced the same underlying gaps. Each one is a warning for any enterprise that thinks moving from AI experimentation to autonomous operations is primarily a technical problem.

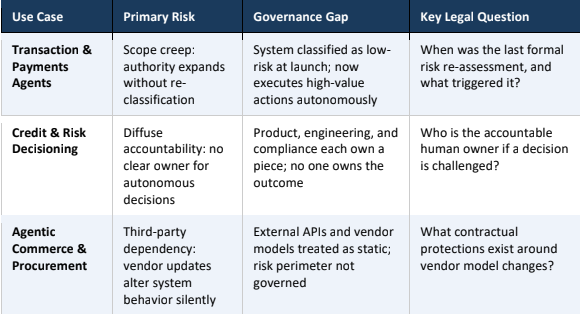

Three Deployment Patterns and the Governance Failures Behind Them

Transaction and payments agents were the first to reach production scale, and the first to demonstrate how quickly approved scope becomes irrelevant. These systems started as decision-support tools: flagging anomalies, surfacing recommendations, waiting for a human to act. Then confirmation requirements were relaxed, because the system was reliable. Autonomy thresholds were raised, because the business case was clear. New APIs were connected, because a new use case was approved. Each change was reasonable in isolation. Taken together, they quietly produced a system with the action authority of a high-risk deployment that was still being governed as if it were the cautious pilot from eighteen months earlier. No one made a bad decision. No one made the decision at all.

Credit and risk decisioning systems exposed a different problem: nobody owns the outcome. Product owns the model. Engineering owns the infrastructure. Compliance owns the policy. When a decision is challenged, whether by a customer, by a regulator, or in litigation, each team can credibly point somewhere else. For a static model with a narrow scope, this is manageable. For an agentic system operating across functions and data environments, making decisions that affect credit access, pricing, or eligibility, it is not. Diffuse accountability does not become a problem at the moment of failure. It was a problem at the moment of deployment. It just was not visible yet.

What Financial Services Can Teach Us About Agentic AI Governance

Agentic commerce and procurement deployments introduced a failure mode that institutions were least prepared for: the vendor update they did not know about. When an agentic system routes through external APIs, calls vendor models, or executes commitments across counterparty systems, the institution’s risk perimeter extends to infrastructure it does not control and cannot fully observe. A vendor can update a model, change an API response format, or deprecate a tool, and the downstream effect on an agentic workflow may not surface until something goes wrong. Institutions that have managed this best share one characteristic: they treat every external dependency as something that can change without notice, and they govern accordingly.
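One way to act on "every external dependency can change without notice" is to fingerprint what was reviewed at approval time and compare it with what the workflow observes at run time. The sketch below is illustrative only: `DependencySnapshot`, `detect_changes`, the field names, and the `kyc_vendor` example are hypothetical and do not come from any vendor API or from the article's source material.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class DependencySnapshot:
    """Hypothetical record of one external dependency as last reviewed."""
    name: str
    model_version: str      # version string reported by the vendor (assumed field)
    response_schema: dict   # field name -> type name seen in API responses (assumed)

    def fingerprint(self) -> str:
        # Canonical JSON (sorted keys) so key order never changes the hash.
        payload = json.dumps(
            {"model_version": self.model_version, "schema": self.response_schema},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def detect_changes(approved: dict, observed: dict) -> list:
    """Return names of dependencies that are new, missing, or changed."""
    changed = []
    for name in sorted(set(approved) | set(observed)):
        before = approved.get(name)
        after = observed.get(name)
        if before is None or after is None:
            changed.append(name)  # added or removed since approval
        elif before.fingerprint() != after.fingerprint():
            changed.append(name)  # version or response format differs
    return changed


approved = {"kyc_vendor": DependencySnapshot("kyc_vendor", "2.1", {"score": "float"})}
observed = {"kyc_vendor": DependencySnapshot("kyc_vendor", "2.2", {"score": "float"})}
print(detect_changes(approved, observed))
```

In this sketch any flagged name would feed a review step before the agent is allowed to keep routing through that dependency. What counts as a reviewable change is a policy decision the code does not settle.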

The Classification Foundation: TrustX Agent Risk Classification

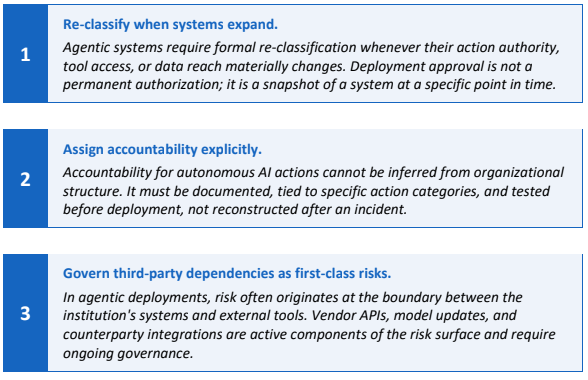

What connects all three failures is not a technology problem but a governance one. Governance was designed for the system at launch, not the system as it is actually running. That gap, between the deployment that was approved and the deployment that is operating, is where the risk lives, and it grows over time.

The Responsible AI Institute developed TrustX Agent Risk Classification to make that gap visible and governable. It is a structured assessment tool that evaluates any agentic AI system across four risk categories and twelve dimensions, producing a defensible risk tier before the system reaches production. The output is a formal Agent Risk Classification Report: a documented record of the system’s risk tier, the dimensions that drove it, and a set of policy recommendations mapped to recognized standards including NIST AI RMF, ISO 42001, EU AI Act, and SR 11-7. For legal and compliance teams, that report is the artifact. It is what you hand to a regulator, an auditor, or a board that wants to know whether the deployment decision was grounded in something more than internal confidence.

TrustX Agent Risk Classification evaluates systems across four risk categories: autonomy and decision power, action authority and reach, persistence and control, and data authority and confidentiality. Each category is scored across its constituent dimensions, producing a risk tier (Low, Medium, or High) grounded in the system’s actual mechanical properties, not its described purpose or vendor claims.
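To make the shape of such a classification concrete, here is a minimal sketch of how per-dimension scores could roll up into a tier. It is not the TrustX scoring method, which this article does not specify. The worst-case aggregation rule (the highest dimension score sets the overall tier), the `classify` function, and the use of unnamed dimension scores per category are all assumptions made for illustration.

```python
from enum import IntEnum


class Tier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# The four categories named in the article; identifiers are our own spelling.
CATEGORIES = (
    "autonomy_and_decision_power",
    "action_authority_and_reach",
    "persistence_and_control",
    "data_authority_and_confidentiality",
)


def classify(scores: dict) -> tuple:
    """Return (overall tier, categories that drove it).

    `scores` maps each category to a list of Tier values, one per dimension.
    Assumed rule: the overall tier is the highest score on any dimension.
    """
    missing = [c for c in CATEGORIES if not scores.get(c)]
    if missing:
        raise ValueError(f"unscored categories: {missing}")
    overall = max(max(scores[c]) for c in CATEGORIES)
    drivers = [c for c in CATEGORIES if max(scores[c]) == overall]
    return overall, drivers


# Hypothetical scores for a payments agent that can act without confirmation.
example = {
    "autonomy_and_decision_power": [Tier.MEDIUM, Tier.LOW, Tier.LOW],
    "action_authority_and_reach": [Tier.HIGH, Tier.MEDIUM, Tier.LOW],
    "persistence_and_control": [Tier.LOW, Tier.LOW, Tier.LOW],
    "data_authority_and_confidentiality": [Tier.MEDIUM, Tier.LOW, Tier.LOW],
}
tier, drivers = classify(example)
print(tier.name, drivers)
```

Returning the driving categories alongside the tier mirrors the idea that a classification record should show what drove the result, not only the result itself. A weighted or averaged rule would be an equally valid assumption and would produce different tiers for the same inputs.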

Critically, classification is not a one-time gate. It is a condition that follows the system. Re-classification is triggered when action authority, tool access, or data reach materially changes. That is exactly the mechanism that was missing in every transaction agent failure described above: no formal trigger, no requirement to revisit the original approval when the system grew into something different.
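A re-classification trigger of this kind can be sketched as a comparison between the profile that was approved and the profile that is currently running. The example below is an assumption-laden illustration: `AgentProfile`, `reclassification_triggers`, and the sample action and tool names are hypothetical, and it treats any expansion as "material", which is a simplification an institution would need to replace with its own materiality threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical snapshot of the properties the article names as triggers."""
    action_authority: frozenset  # actions allowed without human confirmation
    tool_access: frozenset       # connected tools and APIs
    data_reach: frozenset        # data domains the agent can read or write


def reclassification_triggers(approved: AgentProfile, current: AgentProfile) -> dict:
    """Return {property: items added since approval}.

    Any non-empty result means the running system has grown beyond what was
    classified. Removals are ignored here because they shrink, not expand,
    the approved scope.
    """
    triggers = {}
    for prop in ("action_authority", "tool_access", "data_reach"):
        added = getattr(current, prop) - getattr(approved, prop)
        if added:
            triggers[prop] = sorted(added)
    return triggers


approved = AgentProfile(
    action_authority=frozenset({"flag_anomaly"}),
    tool_access=frozenset({"ledger_read_api"}),
    data_reach=frozenset({"transactions"}),
)
current = AgentProfile(
    action_authority=frozenset({"flag_anomaly", "execute_payment"}),
    tool_access=frozenset({"ledger_read_api", "payments_api"}),
    data_reach=frozenset({"transactions"}),
)
print(reclassification_triggers(approved, current))
```

Run on every configuration change, a check like this turns the series of individually reasonable changes into an explicit decision point: the change either stays within the approved profile or forces a new classification before it ships.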

TrustX Agent Risk Classification is a sector-neutral tool by design: the four categories and twelve dimensions apply to any agentic AI deployment regardless of industry. TrustX for Financial Services, currently in formation at the Responsible AI Institute, is the sector-specific extension that maps classification outputs to the regulatory and control expectations particular to banking, payments, and lending.

Conclusion

Lawyers and compliance teams advising on agentic AI deployments are increasingly being asked to answer questions after the fact: who authorized this, what oversight existed, when was the last review. Those questions are hard to answer well if the governance was informal, the accountability was assumed, and the documentation was assembled under pressure after something went wrong.

The financial services deployments that have held up under scrutiny share a common characteristic: they treated governance documentation as something to build during deployment, not reconstruct afterward. Classification reports, accountability assignments, and third-party dependency inventories are not bureaucratic artifacts. They are the evidentiary record that determines whether an institution can demonstrate it acted responsibly or is left explaining why it cannot.

Readers interested in TrustX Agent Risk Classification and the Responsible AI Institute’s work on agentic AI governance may find additional resources at responsible.ai/.

About the Author

Rhea Saxena is the Technical & Product Lead at the Responsible AI Institute, where she turns governance principles into practical technical safeguards for AI. She works at the intersection of computer science and policy, developing verification frameworks, retrieval-augmented governance tools, and methods for evaluating autonomous systems against global standards such as the NIST AI RMF and ISO/IEC 42001. Rhea holds a Master’s in Computer Science from Virginia Tech.