This article was published in The New York Times on December 8, 2021, and written by their editor, Steve Lohr.

It quotes RAII’s Executive Director, Ashley Casovan, and highlights her expertise in responsible AI.

The Responsible AI Institute does not claim ownership of this article.

New York Times: Artificial intelligence software is increasingly used by human resources departments to screen résumés, conduct video interviews and assess a job seeker’s mental agility.

Now, some of the largest corporations in America are joining an effort to prevent that technology from delivering biased results that could perpetuate or even worsen past discrimination.

The Data & Trust Alliance, announced on Wednesday, has signed up major employers across a variety of industries, including CVS Health, Deloitte, General Motors, Humana, IBM, Mastercard, Meta (Facebook’s parent company), Nike and Walmart.

The corporate group is not a lobbying organization or a think tank. Instead, it has developed an evaluation and scoring system for artificial intelligence software.

The Data & Trust Alliance, tapping corporate and outside experts, has devised a 55-question evaluation, which covers 13 topics, and a scoring system. The goal is to detect and combat algorithmic bias.

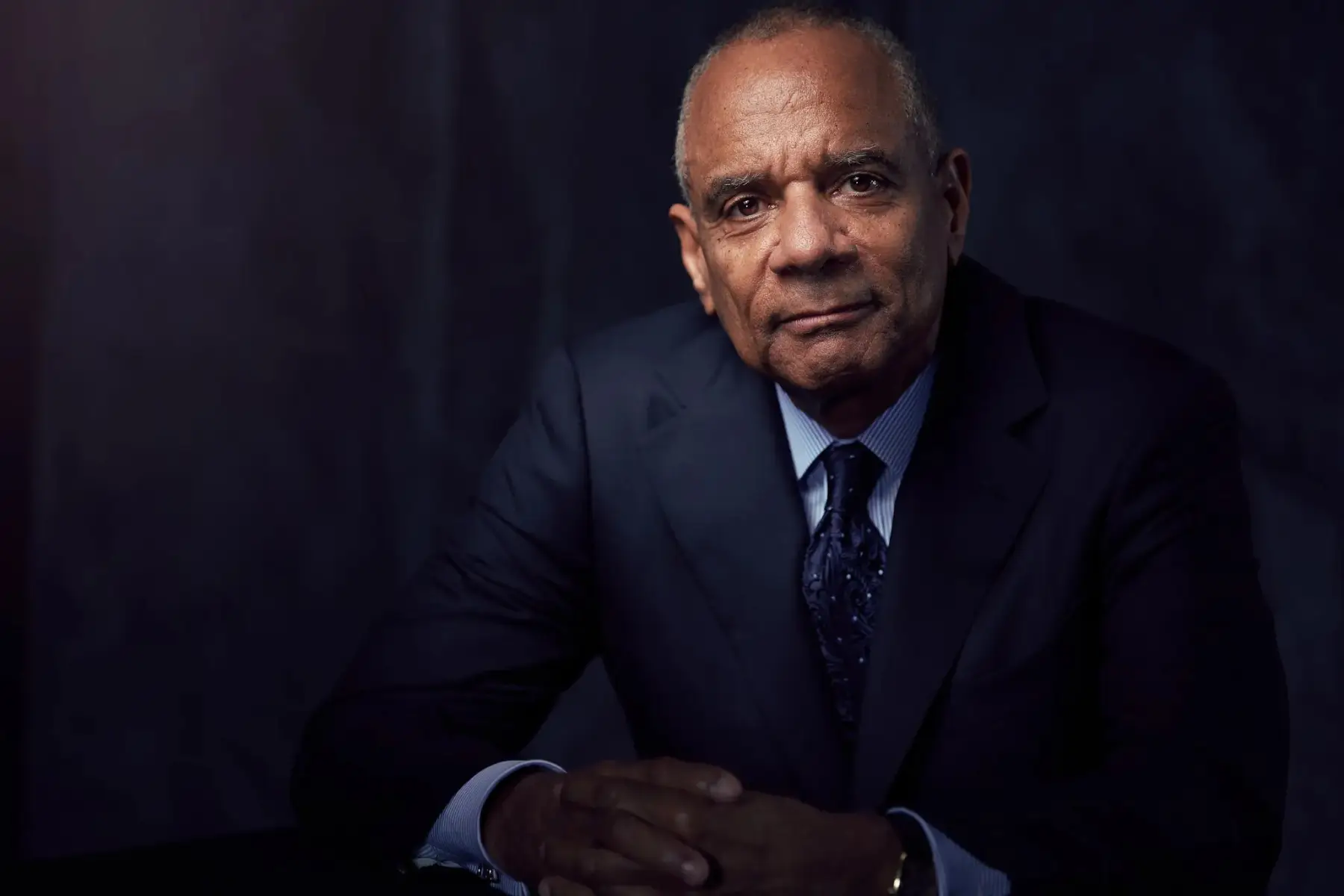

“This is not just adopting principles, but actually implementing something concrete,” said Kenneth Chenault, co-chairman of the group and a former chief executive of American Express, which has agreed to adopt the anti-bias tool kit.

The companies are responding to concerns, backed by an ample body of research, that A.I. programs can inadvertently produce biased results. Data is the fuel of modern A.I. software, so the data selected and how it is employed to make inferences are crucial.

If the data used to train an algorithm is largely information about white men, the results will most likely be biased against minorities or women. Or if the data used to predict success at a company is based on who has done well at the company in the past, the result may well be an algorithmically reinforced version of past bias.
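To make that mechanism concrete, here is a minimal sketch in Python. It is not the alliance's tool or any vendor's model: the records are synthetic, the group labels "A" and "B" are placeholders, and the "model" is deliberately naive, scoring each group by its past success rate.

```python
from collections import defaultdict

# Hypothetical, synthetic history of (group, did_well_at_company) records.
# Group "A" was favored in the past; these numbers are invented for illustration.
history = (
    [("A", True)] * 40 + [("A", False)] * 10
    + [("B", True)] * 3 + [("B", False)] * 7
)


def learn_success_rates(records):
    """'Train' a naive scorer: the observed past success rate for each group."""
    totals = defaultdict(int)
    successes = defaultdict(int)
    for group, did_well in records:
        totals[group] += 1
        successes[group] += int(did_well)
    return {group: successes[group] / totals[group] for group in totals}


rates = learn_success_rates(history)

# Ratio of the lowest predicted rate to the highest; 1.0 would mean parity.
parity_ratio = min(rates.values()) / max(rates.values())

for group, rate in sorted(rates.items()):
    print(f"group {group}: predicted success rate {rate:.2f}")
print(f"lowest-to-highest ratio: {parity_ratio:.3f}")
```

Because the scorer only knows who did well before, it hands the historical gap straight back as a prediction. Real systems are far more complex, but a skewed record can shape their output in a similar way.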

Seemingly neutral data sets, when combined with others, can produce results that discriminate by race, gender or age. The group’s questionnaire, for example, asks about the use of such “proxy” data including cellphone type, sports affiliations and social club memberships.
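One rough way to see whether a field behaves as a proxy is to ask how well it alone predicts a protected attribute. The sketch below is an illustration under stated assumptions, not the questionnaire's method: the records are synthetic, and `phone_x`, `phone_y`, `G1` and `G2` are made-up labels.

```python
from collections import Counter, defaultdict

# Hypothetical, synthetic applicant records: (cellphone_type, protected_group).
records = (
    [("phone_x", "G1")] * 45 + [("phone_x", "G2")] * 5
    + [("phone_y", "G1")] * 10 + [("phone_y", "G2")] * 40
)


def proxy_accuracy(rows):
    """Share of rows whose group is recovered by guessing the most
    common group for each value of the feature."""
    by_value = defaultdict(Counter)
    for value, group in rows:
        by_value[value][group] += 1
    correct = sum(counts.most_common(1)[0][1] for counts in by_value.values())
    return correct / len(rows)


def baseline_accuracy(rows):
    """Share recovered by always guessing the overall most common group,
    ignoring the feature entirely."""
    overall = Counter(group for _, group in rows)
    return overall.most_common(1)[0][1] / len(rows)


with_feature = proxy_accuracy(records)
without_feature = baseline_accuracy(records)
print(f"guessing group from phone type: {with_feature:.2f}")
print(f"guessing group with no feature: {without_feature:.2f}")
```

A large gap between the two numbers suggests the field carries information about the protected group, so a model using it could discriminate without ever seeing race, gender or age directly.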

Governments around the world are moving to adopt rules and regulations. The European Union has proposed a regulatory framework for A.I. The White House is working on a “bill of rights” for A.I.

In an advisory note to companies on the use of the technology, the Federal Trade Commission warned, “Hold yourself accountable — or be ready for the F.T.C. to do it for you.”

The Data & Trust Alliance seeks to address the potential danger of powerful algorithms being used in work force decisions early rather than react after widespread harms are apparent, as Silicon Valley did on matters like privacy and the amplifying of misinformation.

“We’ve got to move past the era of ‘move fast and break things and figure it out later,’” said Mr. Chenault, who was on the Facebook board for two years, until 2020.

Corporate America is pushing programs for a more diverse work force. Mr. Chenault, who is now chairman of the venture capital firm General Catalyst, is one of the most prominent African Americans in business.

Told of the new initiative, Ashley Casovan, executive director of the Responsible AI Institute, a nonprofit organization developing a certification system for A.I. products, said the focused approach and big-company commitments were encouraging.

“But having the companies do it on their own is problematic,” said Ms. Casovan, who advises the Organization for Economic Cooperation and Development on A.I. issues. “We think this ultimately needs to be done by an independent authority.”

The corporate group grew out of conversations among business leaders who were recognizing that their companies, in nearly every industry, were “becoming data and A.I. companies,” Mr. Chenault said. And that meant new opportunities, but also new risks.

The group was brought together by Mr. Chenault and Samuel Palmisano, co-chairman of the alliance and former chief executive of IBM, starting in 2020, calling mainly on chief executives at big companies.

They decided to focus on the use of technology to support work force decisions in hiring, promotion, training and compensation. Senior employees at their companies were assigned to execute the project.

Internal surveys showed that their companies were adopting A.I.-guided software in human resources, but most of the technology was coming from suppliers. And the corporate users had little understanding of what data the software makers were using in their algorithmic models or how those models worked.

To develop a solution, the corporate group brought in its own people in human resources, data analysis, legal and procurement, but also the software vendors and outside experts. The result is a bias detection, measurement and mitigation system for examining the data practices and design of human resources software.

“Every algorithm has human values embedded in it, and this gives us another lens to look at that,” said Nuala O’Connor, senior vice president for digital citizenship at Walmart. “This is practical and operational.”

The evaluation program has been developed and refined over the past year. The aim was to make it apply not only to major human resources software makers like Workday, Oracle and SAP, but also to the host of smaller companies that have sprung up in the fast-growing field called “work tech.”

Many of the questions in the anti-bias questionnaire focus on data, which is the raw material for A.I. models.

“The promise of this new era of data and A.I. is going to be lost if we don’t do this responsibly,” Mr. Chenault said.